nf-core/chipseq

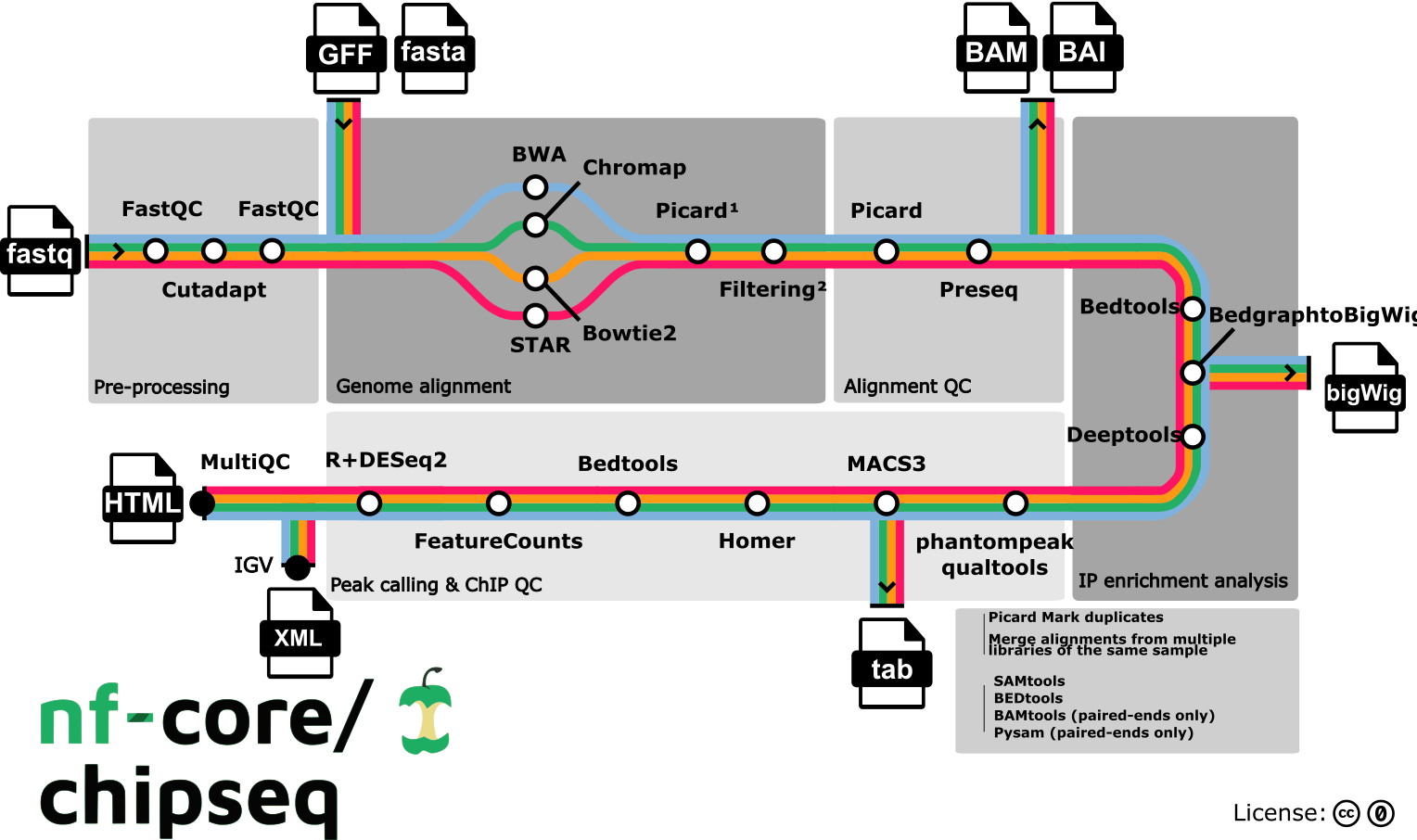

ChIP-seq peak-calling, QC and differential analysis pipeline.

Introduction

nfcore/chipseq is a bioinformatics analysis pipeline used for Chromatin ImmunoPrecipitation sequencing (ChIP-seq) data.

On release, automated continuous integration tests run the pipeline on a full-sized dataset on the AWS cloud infrastructure. The dataset consists of FoxA1 (transcription factor) and EZH2 (histone,mark) IP experiments from Franco et al. 2015 (GEO: GSE59530, PMID: 25752574) and Popovic et al. 2014 (GEO: GSE57632, PMID: 25188243), respectively. This ensures that the pipeline runs on AWS, has sensible resource allocation defaults set to run on real-world datasets, and permits the persistent storage of results to benchmark between pipeline releases and other analysis sources. The results obtained from running the full-sized tests can be viewed on the nf-core website.

The pipeline is built using Nextflow, a workflow tool to run tasks across multiple compute infrastructures in a very portable manner. It uses Docker/Singularity containers making installation trivial and results highly reproducible. The Nextflow DSL2 implementation of this pipeline uses one container per process which makes it much easier to maintain and update software dependencies. Where possible, these processes have been submitted to and installed from nf-core/modules in order to make them available to all nf-core pipelines, and to everyone within the Nextflow community!

Online videos

A short talk about the history, current status and functionality on offer in this pipeline was given by Jose Espinosa-Carrasco (@joseespinosa) on 26th July 2022 as part of the nf-core/bytesize series.

You can find numerous talks on the nf-core events page from various topics including writing pipelines/modules in Nextflow DSL2, using nf-core tooling, running nf-core pipelines as well as more generic content like contributing to Github. Please check them out!

Pipeline summary

- Raw read QC (

FastQC) - Adapter trimming (

Trim Galore!) - Choice of multiple aligners

1.(

BWA) 2.(Chromap) 3.(Bowtie2) 4.(STAR) - Mark duplicates (

picard) - Merge alignments from multiple libraries of the same sample (

picard)- Re-mark duplicates (

picard) - Filtering to remove:

- reads mapping to blacklisted regions (

SAMtools,BEDTools) - reads that are marked as duplicates (

SAMtools) - reads that are not marked as primary alignments (

SAMtools) - reads that are unmapped (

SAMtools) - reads that map to multiple locations (

SAMtools) - reads containing > 4 mismatches (

BAMTools) - reads that have an insert size > 2kb (

BAMTools; paired-end only) - reads that map to different chromosomes (

Pysam; paired-end only) - reads that arent in FR orientation (

Pysam; paired-end only) - reads where only one read of the pair fails the above criteria (

Pysam; paired-end only)

- reads mapping to blacklisted regions (

- Alignment-level QC and estimation of library complexity (

picard,Preseq) - Create normalised bigWig files scaled to 1 million mapped reads (

BEDTools,bedGraphToBigWig) - Generate gene-body meta-profile from bigWig files (

deepTools) - Calculate genome-wide IP enrichment relative to control (

deepTools) - Calculate strand cross-correlation peak and ChIP-seq quality measures including NSC and RSC (

phantompeakqualtools) - Call broad/narrow peaks (

MACS3) - Annotate peaks relative to gene features (

HOMER) - Create consensus peakset across all samples and create tabular file to aid in the filtering of the data (

BEDTools) - Count reads in consensus peaks (

featureCounts) - PCA and clustering (

R,DESeq2)

- Re-mark duplicates (

- Create IGV session file containing bigWig tracks, peaks and differential sites for data visualisation (

IGV). - Present QC for raw read, alignment, peak-calling and differential binding results (

MultiQC,R)

Usage

If you are new to Nextflow and nf-core, please refer to this page on how to set-up Nextflow. Make sure to test your setup with -profile test before running the workflow on actual data.

To run on your data, prepare a tab-separated samplesheet with your input data. Please follow the documentation on samplesheets for more details. An example samplesheet for running the pipeline looks as follows:

sample,fastq_1,fastq_2,replicate,antibody,control,control_replicate

WT_BCATENIN_IP,BLA203A1_S27_L006_R1_001.fastq.gz,,1,BCATENIN,WT_INPUT,1

WT_BCATENIN_IP,BLA203A25_S16_L001_R1_001.fastq.gz,,2,BCATENIN,WT_INPUT,2

WT_BCATENIN_IP,BLA203A25_S16_L002_R1_001.fastq.gz,,2,BCATENIN,WT_INPUT,2

WT_BCATENIN_IP,BLA203A25_S16_L003_R1_001.fastq.gz,,2,BCATENIN,WT_INPUT,2

WT_BCATENIN_IP,BLA203A49_S40_L001_R1_001.fastq.gz,,3,BCATENIN,WT_INPUT,3

WT_INPUT,BLA203A6_S32_L006_R1_001.fastq.gz,,1,,,

WT_INPUT,BLA203A30_S21_L001_R1_001.fastq.gz,,2,,,

WT_INPUT,BLA203A30_S21_L002_R1_001.fastq.gz,,2,,,

WT_INPUT,BLA203A31_S21_L003_R1_001.fastq.gz,,3,,,Now, you can run the pipeline using:

nextflow run nf-core/chipseq --input samplesheet.csv --outdir <OUTDIR> --genome GRCh37 -profile <docker/singularity/podman/shifter/charliecloud/conda/institute>See usage docs for all of the available options when running the pipeline.

Please provide pipeline parameters via the CLI or Nextflow -params-file option. Custom config files including those provided by the -c Nextflow option can be used to provide any configuration except for parameters; see the docs here.

For more details and further functionality, please refer to the usage documentation and the parameter documentation.

Pipeline output

To see the results of an example test run with a full size dataset refer to the results tab on the nf-core website pipeline page. For more details about the output files and reports, please refer to the output documentation.

Credits

These scripts were originally written by Chuan Wang (@chuan-wang) and Phil Ewels (@ewels) for use at the National Genomics Infrastructure at SciLifeLab in Stockholm, Sweden. The pipeline was re-implemented by Harshil Patel (@drpatelh) from Seqera Labs, Spain and converted to Nextflow DSL2 by Jose Espinosa-Carrasco (@JoseEspinosa) from The Comparative Bioinformatics Group at The Centre for Genomic Regulation, Spain.

The pipeline workflow diagram was designed by Sarah Guinchard (@G-Sarah).

Many thanks to others who have helped out and contributed along the way too, including (but not limited to): @apeltzer, @bc2zb, @bjlang, @crickbabs, @drejom, @houghtos, @KevinMenden, @mashehu, @pditommaso, @Rotholandus, @sofiahaglund, @tiagochst and @winni2k.

Contributions and Support

If you would like to contribute to this pipeline, please see the contributing guidelines.

For further information or help, don’t hesitate to get in touch on the Slack #chipseq channel (you can join with this invite).

Citations

If you use nf-core/chipseq for your analysis, please cite it using the following doi: 10.5281/zenodo.3240506

An extensive list of references for the tools used by the pipeline can be found in the CITATIONS.md file.

You can cite the nf-core publication as follows:

The nf-core framework for community-curated bioinformatics pipelines.

Philip Ewels, Alexander Peltzer, Sven Fillinger, Harshil Patel, Johannes Alneberg, Andreas Wilm, Maxime Ulysse Garcia, Paolo Di Tommaso & Sven Nahnsen.

Nat Biotechnol. 2020 Feb 13. doi: 10.1038/s41587-020-0439-x.