nf-core/taxprofiler

Highly parallelised multi-taxonomic profiling of shotgun short- and long-read metagenomic data

1.0.0). The latest stable release is 1.2.6.

Introduction

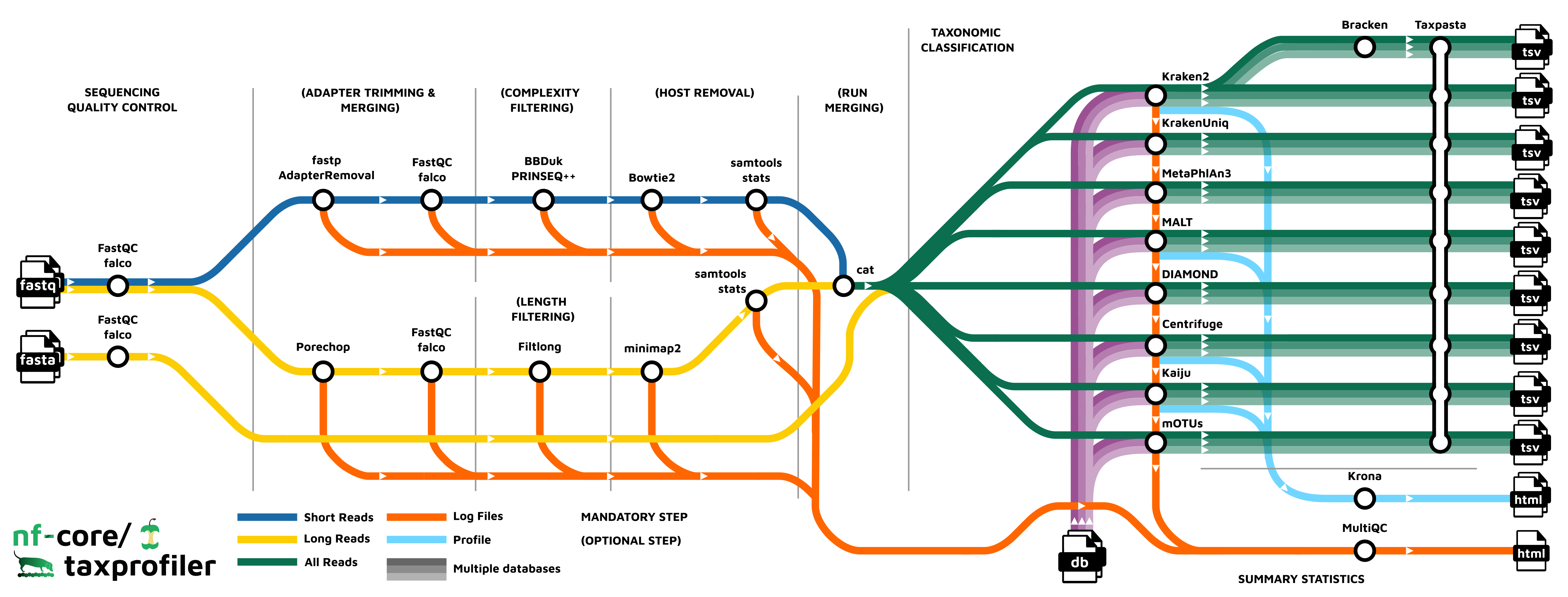

nf-core/taxprofiler is a bioinformatics best-practice analysis pipeline for taxonomic classification and profiling of shotgun metagenomic data. It allows for in-parallel taxonomic identification of reads or taxonomic abundance estimation with multiple classification and profiling tools against multiple databases, and produces standardised output tables.

The pipeline is built using Nextflow, a workflow tool to run tasks across multiple compute infrastructures in a very portable manner. It uses Docker/Singularity containers making installation trivial and results highly reproducible. The Nextflow DSL2 implementation of this pipeline uses one container per process which makes it much easier to maintain and update software dependencies. Where possible, these processes have been submitted to and installed from nf-core/modules in order to make them available to all nf-core pipelines, and to everyone within the Nextflow community!

On release, automated continuous integration tests run the pipeline on a full-sized dataset on the AWS cloud infrastructure. This ensures that the pipeline runs on AWS, has sensible resource allocation defaults set to run on real-world datasets, and permits the persistent storage of results to benchmark between pipeline releases and other analysis sources. The results obtained from the full-sized test can be viewed on the nf-core website.

The nf-core/taxprofiler CI test dataset uses sequencing data from Maixner et al. (2021) Curr. Bio. The AWS full test dataset uses sequencing data and reference genomes from Meslier (2022) Sci. Data.

Pipeline summary

- Performs read QC (FastQC, or falco as an alternative option)

- Performs optional read pre-processing

- Supports statistics for host-read removal (Samtools)

- Performs taxonomic classification and/or profiling using one or more of:

- Performs optional post-processing with:

- Standardises output tables (Taxpasta)

- Presents QC for raw reads (MultiQC)

- Plots Kraken2, Centrifuge, Kaiju and MALT results (Krona)

Quick Start

- Install Nextflow (>=22.10.1).

- Install any of Docker, Singularity (you can follow this tutorial), Podman, Shifter or Charliecloud for full pipeline reproducibility (you can use Conda both to install Nextflow itself and also to manage software within pipelines. Please only use it within pipelines as a last resort; see docs).

- Download the pipeline and test it on a minimal dataset with a single command:

      nextflow run nf-core/taxprofiler -profile test,YOURPROFILE --outdir <OUTDIR>

  Note that some form of configuration will be needed so that Nextflow knows how to fetch the required software. This is usually done in the form of a config profile (YOURPROFILE in the example command above). You can chain multiple config profiles in a comma-separated string.

  - The pipeline comes with config profiles called docker, singularity, podman, shifter, charliecloud and conda which instruct the pipeline to use the named tool for software management. For example, -profile test,docker.
  - Please check nf-core/configs to see if a custom config file to run nf-core pipelines already exists for your Institute. If so, you can simply use -profile <institute> in your command. This will enable either docker or singularity and set the appropriate execution settings for your local compute environment.
  - If you are using singularity, please use the nf-core download command to download images first, before running the pipeline. Setting the NXF_SINGULARITY_CACHEDIR or singularity.cacheDir Nextflow options enables you to store and re-use the images from a central location for future pipeline runs.
  - If you are using conda, it is highly recommended to use the NXF_CONDA_CACHEDIR or conda.cacheDir settings to store the environments in a central location for future pipeline runs.

- Start running your own analysis!

      nextflow run nf-core/taxprofiler --input samplesheet.csv --databases database.csv --outdir <OUTDIR> --run_<TOOL1> --run_<TOOL2> -profile <docker/singularity/podman/shifter/charliecloud/conda/institute>
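The profile chaining and image caching described in the steps above can be sketched in a small shell script. This is a minimal illustration rather than part of the pipeline: the helper names `join_profiles` and `build_test_cmd` are hypothetical, and the cache directory path is an assumed placeholder. The script only prints the test command so it can be reviewed before anything is run.

```shell
#!/usr/bin/env bash
# Sketch only: assembles (but does not execute) the Quick Start test command.
# join_profiles and build_test_cmd are hypothetical helper names; they are not
# part of Nextflow or nf-core.

# Join config profile names into the comma-separated string that -profile expects.
join_profiles() {
  local IFS=','
  printf '%s' "$*"
}

# Print the test command for a given output directory and list of profiles.
build_test_cmd() {
  local outdir="$1"
  shift
  printf 'nextflow run nf-core/taxprofiler -profile %s --outdir %s' \
    "$(join_profiles "$@")" "$outdir"
}

# Assumed location for a shared Singularity image cache; adjust to your system.
# An existing value of NXF_SINGULARITY_CACHEDIR is left untouched.
export NXF_SINGULARITY_CACHEDIR="${NXF_SINGULARITY_CACHEDIR:-$HOME/.singularity_cache}"

# Print the command for review; copy it into the terminal to run it for real.
build_test_cmd results test singularity
echo
```

The same pattern would extend to the full analysis command by appending `--input`, `--databases` and one `--run_<TOOL>` flag per profiler. Swapping `singularity` for `docker` or an institutional profile changes only the arguments passed to `build_test_cmd`.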

Documentation

The nf-core/taxprofiler pipeline comes with documentation about the pipeline usage, parameters and output.

Credits

nf-core/taxprofiler was originally written by James A. Fellows Yates, Moritz Beber, and Sofia Stamouli.

We thank the following people for their contributions to the development of this pipeline:

Lauri Mesilaakso, Tanja Normark, Maxime Borry, Thomas A. Christensen II, Jianhong Ou, Rafal Stepien, Mahwash Jamy, and the nf-core/community.

We also are grateful for the feedback and comments from:

Credit and thanks also go to Zandra Fagernäs for the logo.

Contributions and Support

If you would like to contribute to this pipeline, please see the contributing guidelines.

For further information or help, don’t hesitate to get in touch on the Slack #taxprofiler channel (you can join with this invite).

Citations

An extensive list of references for the tools used by the pipeline can be found in the CITATIONS.md file.

You can cite the nf-core publication as follows:

The nf-core framework for community-curated bioinformatics pipelines.

Philip Ewels, Alexander Peltzer, Sven Fillinger, Harshil Patel, Johannes Alneberg, Andreas Wilm, Maxime Ulysse Garcia, Paolo Di Tommaso & Sven Nahnsen.

Nat Biotechnol. 2020 Feb 13. doi: 10.1038/s41587-020-0439-x.